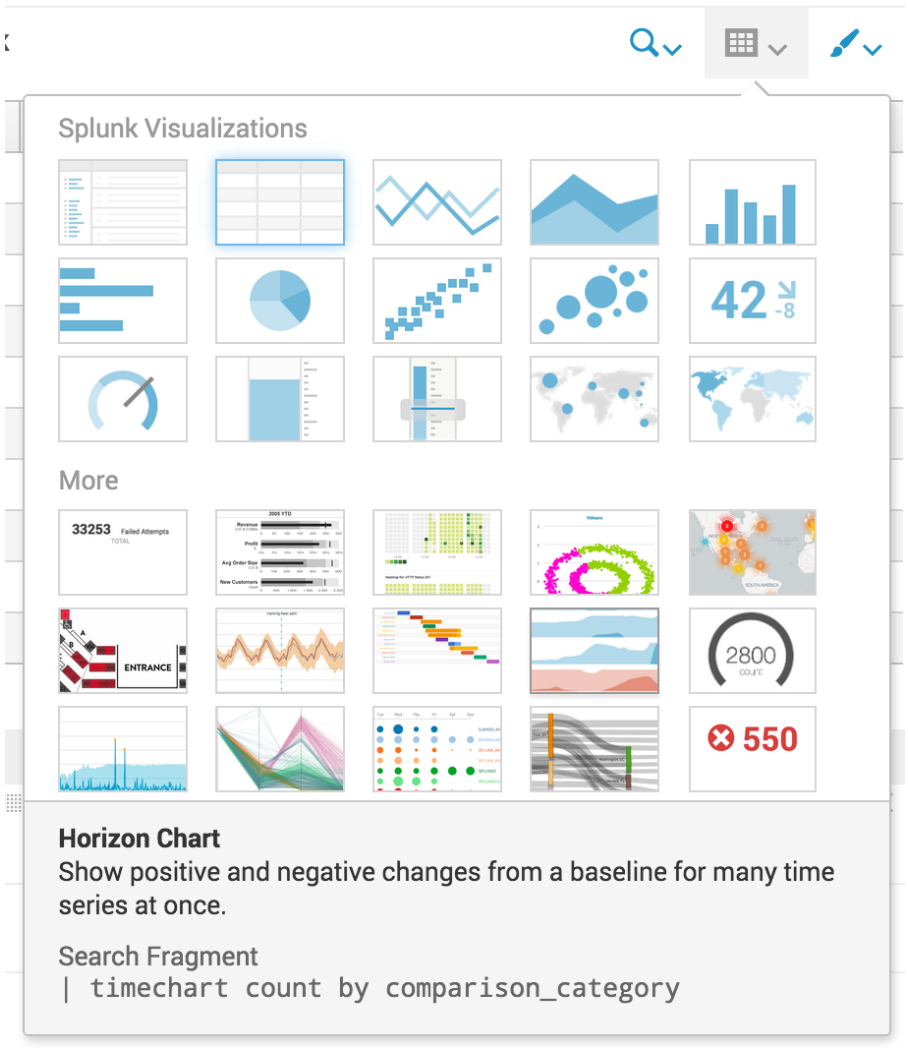

Visualizations are not new to Splunk, whether defined in XML or written in JavaScript (D3), but the visualizations offered in Splunk 6.4 are the easiest to use and the most powerful yet!

Splunk 6.4 brings four major improvements to visualizations:

- 12 new D3 visualizations

- The ability to add and extend your own visualizations to the library

- Developer APIs...